
Drones For Accident Reconstruction


Drones are an important tool for crash-scene investigation;

Unmanned aircraft collect accurate data quickly and easily, helping to clear the crash site sooner and improve safety;

Drones gather evidence in less than 30 minutes, compared to multiple hours using traditional methods;

Learn how to drone map an accident site, including how to create point clouds and 3D models;

Use DJI drones for accident reconstruction, including the mapping drone, the Phantom 4 RTK.

Drones have become a key tool for crash-scene investigation, helping to collect accurate data more quickly and easily than traditional methods.

Deploying UAS for accident reconstruction cuts down the evidence-gathering process - taking less than 30 minutes for a job that used to take three or four hours.

In just a single flight, collect hundreds of images to create accurate and detailed 2D maps and 3D models for thorough accident investigation.

"Utilising UAS for crash-scene reconstruction can significantly reduce the data collection time at a crash scene, resulting in shorter road closure times and officer on-scene times," concludes Johns Hopkins Applied Physics Laboratory.

Drone Mapping A Crash Site

So, how do you drone map a crash scene and what insights can be obtained from this aerial data?

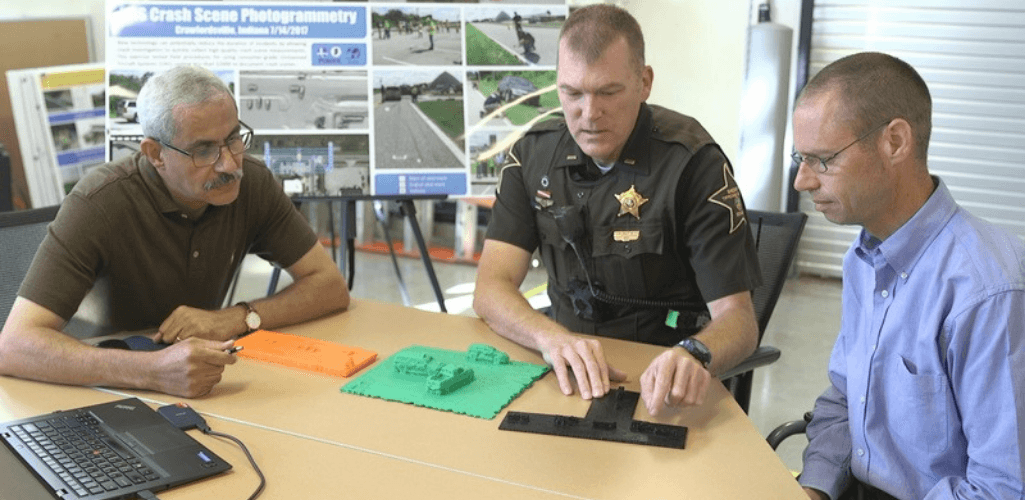

At DJI's flagship AirWorks 2020 conference, Reza Karamooz, CEO of GRADD CO, gave a step-by-step presentation covering the process.

Step One: Capturing The Evidence

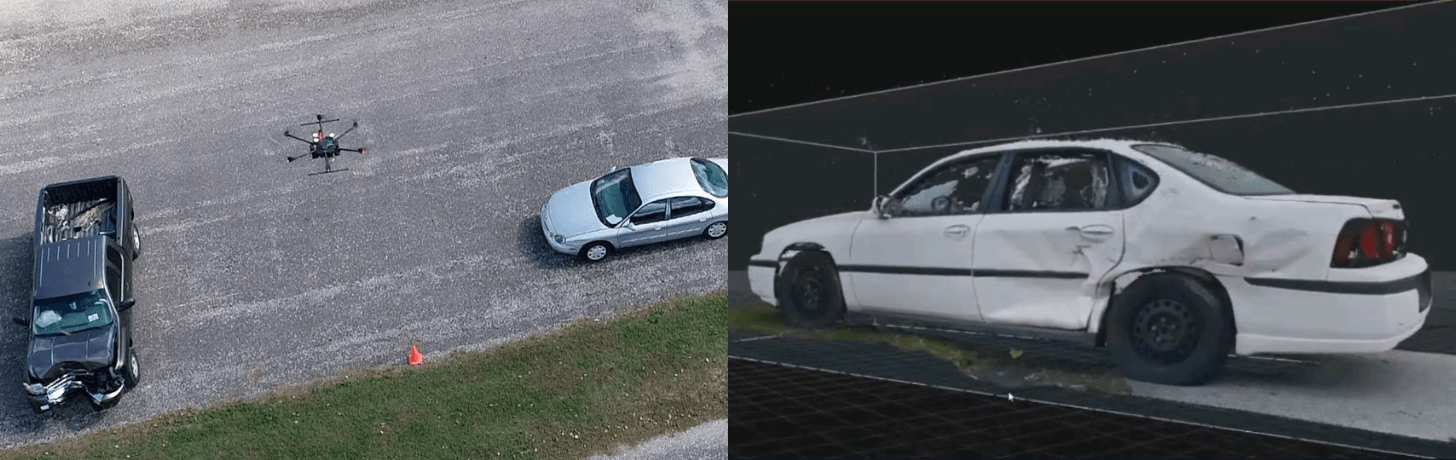

A Chevrolet Impala and a Ford Crown Victoria have collided; the Impala struck on the rear driver side.

First responders need to capture the evidence as quickly and thoroughly as possible.

This includes the vehicles' final resting position, vehicle damage, and collision debris distribution.

For speed, a DJI Phantom 4 Pro was deployed, although other drones in the DJI ecosystem, such as the Phantom 4 RTK or Matrice drones, could be used.

The drone captured several hundred images of the vehicles and the crash scene, following specific flight paths.

Mr Karamooz said: "It took us three to four minutes to orbit each vehicle, and five to six minutes to orbit around the whole scene.

"We collected all of the images we needed in one battery!

"We also flew along the street to get good resolution on the debris which was scattered after the crash. This can be useful for evidence.

"We also flew vertically - as high as about 75 or 80 feet - to capture a beautiful bird's-eye view of the scene.

"Sometimes, if a vertical flight is all you need, you can capture all of the necessary data in a five-minute flight."

For good practice, the team conducted a secondary drone flight to capture more shots, as a back-up.

To complement the drone data, the team used a Nikon D5500 DSLR camera with an 18-55mm VR II Lens - to capture on-the-ground images of both vehicles to help create 3D models of them - and a Leica DISTO S910 laser measurer.

Step Two: Processing The Data

After collecting the drone data, it is time to pull the images into a dedicated piece of photogrammetry software.

RealityCapture is used in this example, but other platforms such as Pix4Dmapper, DroneDeploy, DJI Terra, and Agisoft Metashape can be used.

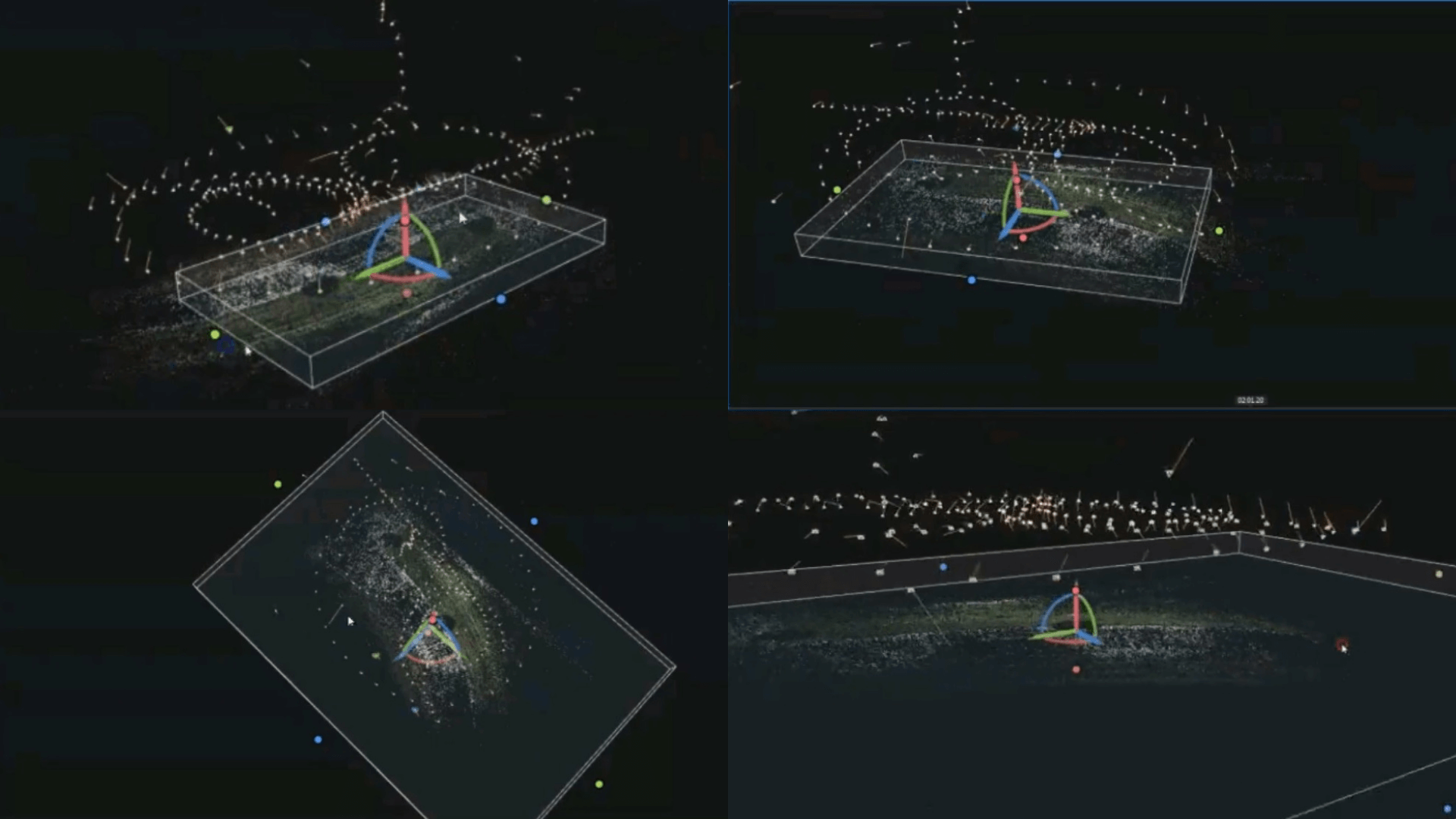

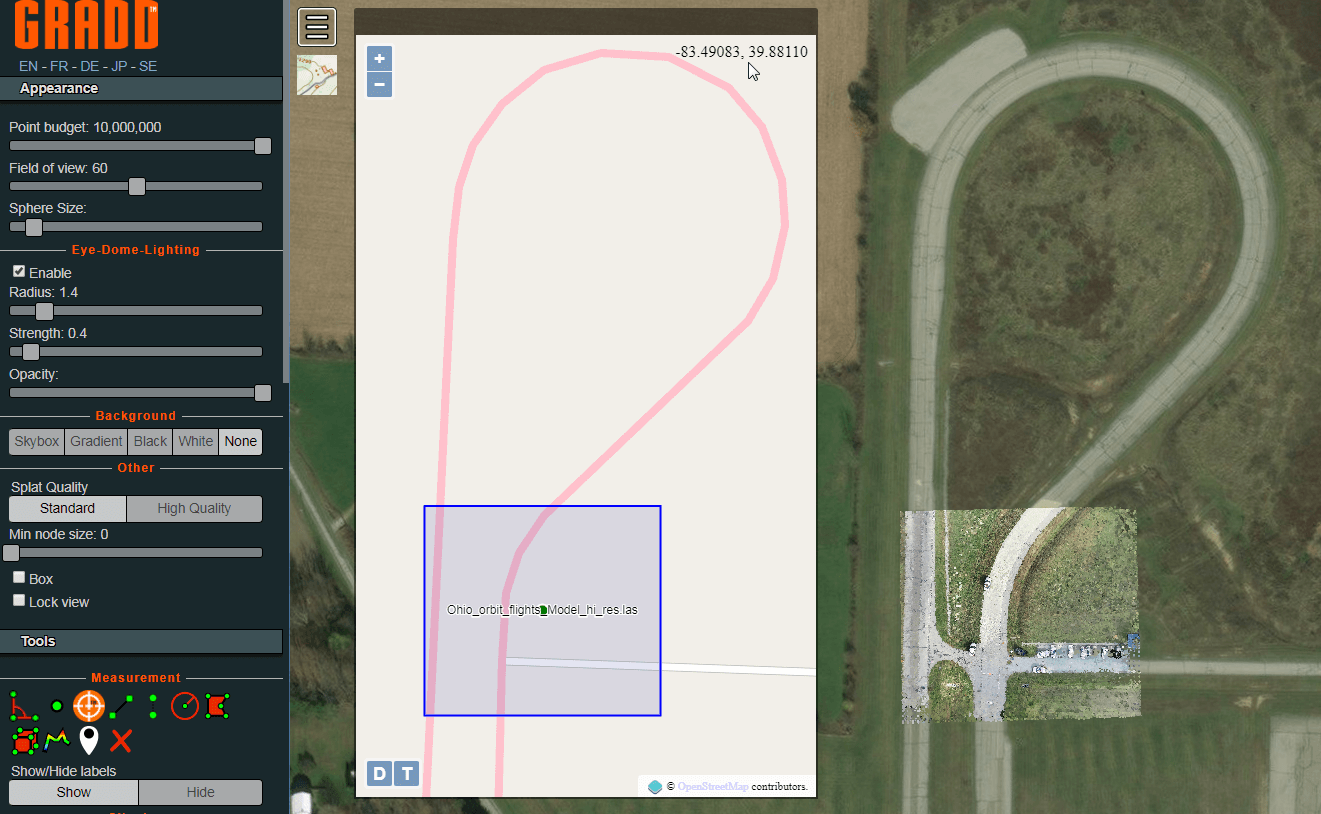

At this point, ground images and laser scans can also be processed, but in this example, only the drone images were used to create this sparse point cloud.

A point cloud is a dataset that represents objects or space as a set of points, each with its own X, Y, and Z coordinates.

This particular point cloud shows the flight patterns conducted by the drone.

Once the point cloud is formed, draw a box around the area you want for your 3D model.

Mr Karamooz said: "Pick what is important and eliminate what you don't care about because the size of the file could be a lot bigger if you pick areas that aren't important for analysis."
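Cropping a point cloud to a box can be sketched in a few lines. This is a minimal illustration rather than how RealityCapture works internally; the `Point` type, the `crop_to_box` helper, and the sample coordinates (assumed to be in metres) are all hypothetical.

```python
from typing import NamedTuple


class Point(NamedTuple):
    """One point of a point cloud: X, Y, and Z coordinates (metres assumed)."""
    x: float
    y: float
    z: float


def crop_to_box(points, lower, upper):
    """Keep only the points inside the axis-aligned box from lower to upper."""
    return [
        p for p in points
        if all(lo <= value <= hi for value, lo, hi in zip(p, lower, upper))
    ]


# Hypothetical sample points; a real cloud holds millions of them.
cloud = [
    Point(2.0, 3.0, 0.5),    # on a vehicle
    Point(4.5, 1.0, 0.0),    # debris on the road
    Point(40.0, 25.0, 6.0),  # a tree well outside the scene
]

scene = crop_to_box(
    cloud,
    lower=Point(0.0, 0.0, -1.0),
    upper=Point(10.0, 10.0, 3.0),
)
print(len(cloud), "points before cropping,", len(scene), "after")
```

Every point dropped at this stage is one that the later modelling steps no longer have to process, which is why a tight box keeps the file size down.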

Step Three: Creating A 3D Model

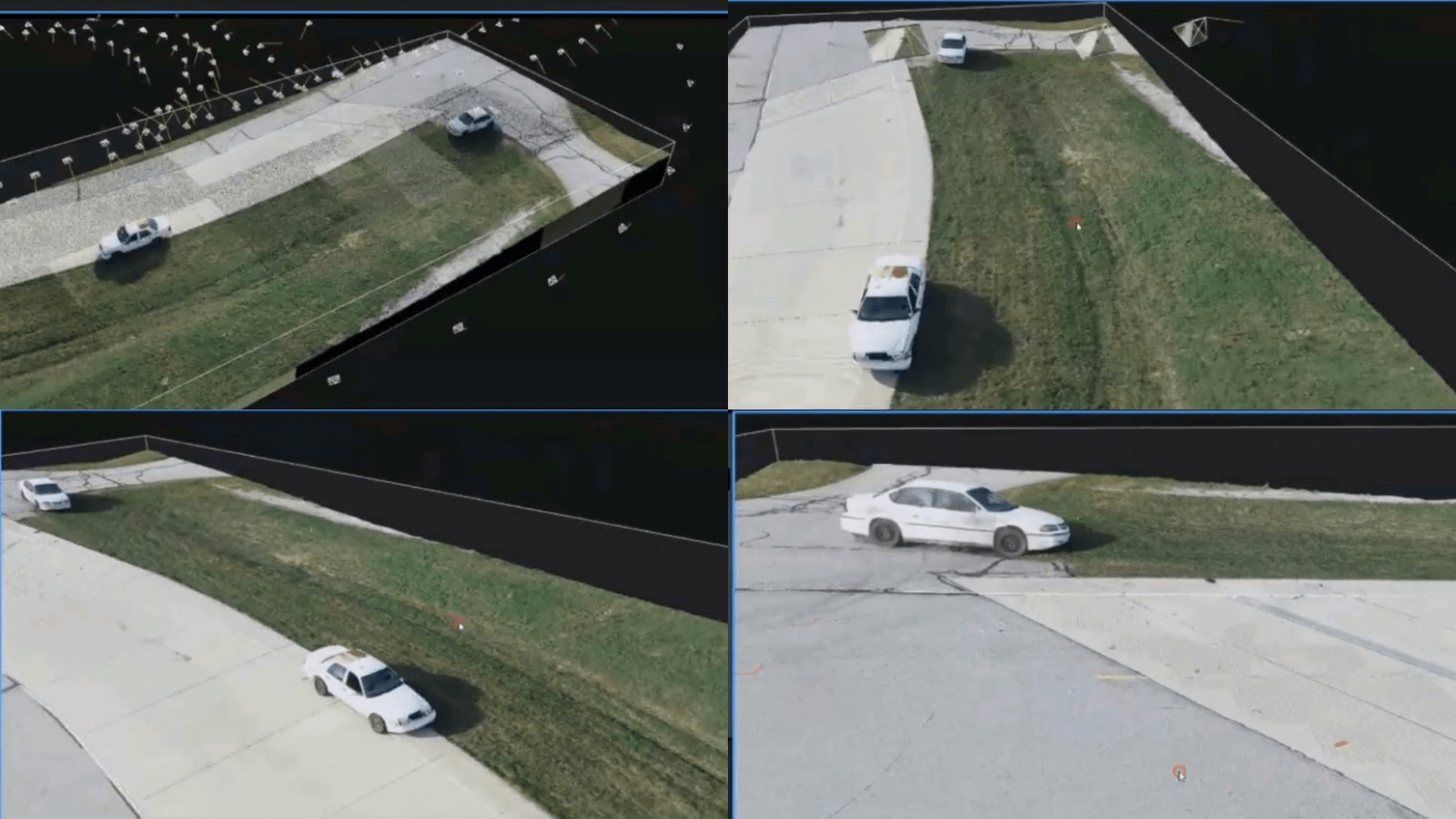

Once you are happy with the selected area, you can turn the point cloud into a 3D model.

This conversion is known as texturing, and you end up with a model like this.

All of a sudden, that strange-looking point cloud transforms into a detailed model, resembling reality, and in this case, the crash scene between the Impala and the Crown Vic.

Mr Karamooz said: "Once the model has been textured, it starts to populate the surface with all of the colours, textures, and everything else that was at the scene. Every detail will be there."

Step Four: Analysing The Data

The dataset allows teams to view and conduct analysis and measurements in 3D.

In this demonstration, GRADD used the LAS3D software suite to view and measure the 3D models, but this type of activity can be conducted with other software packages.

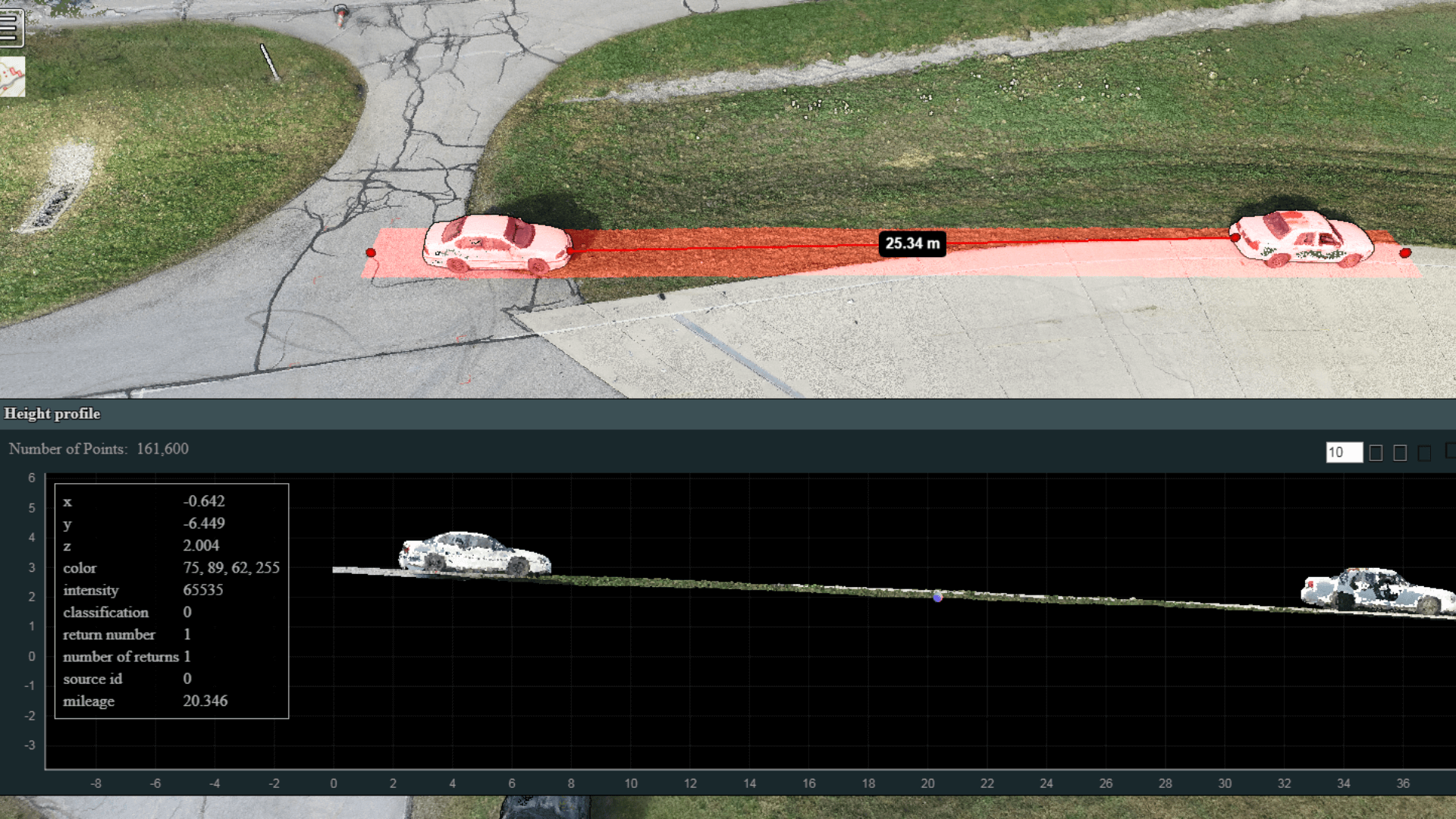

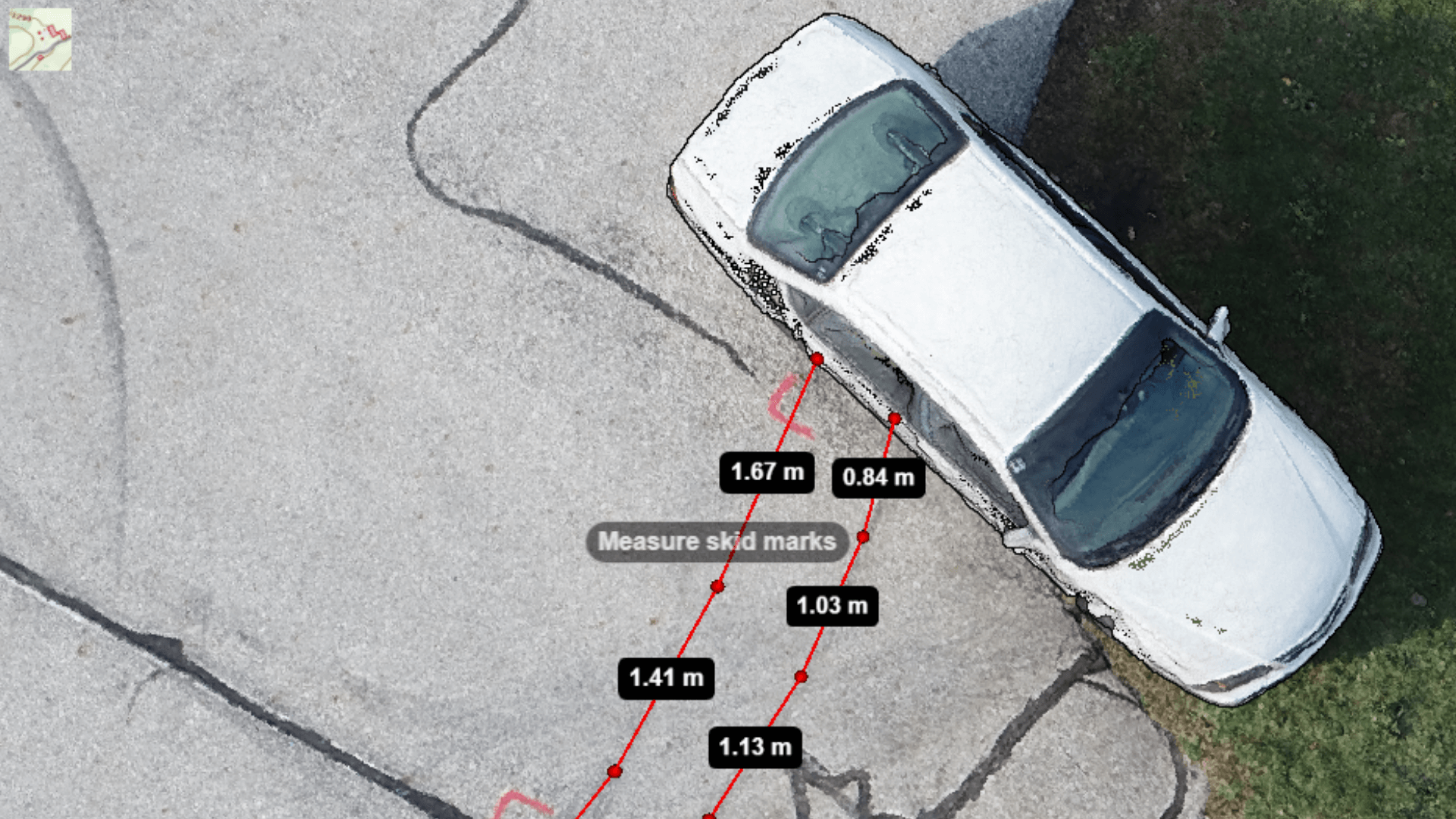

Navigable 3D models allow you to view every angle of a scene and gather vital insights such as terrain profile...

...skid-mark measurements...

...or distances from each vehicle.

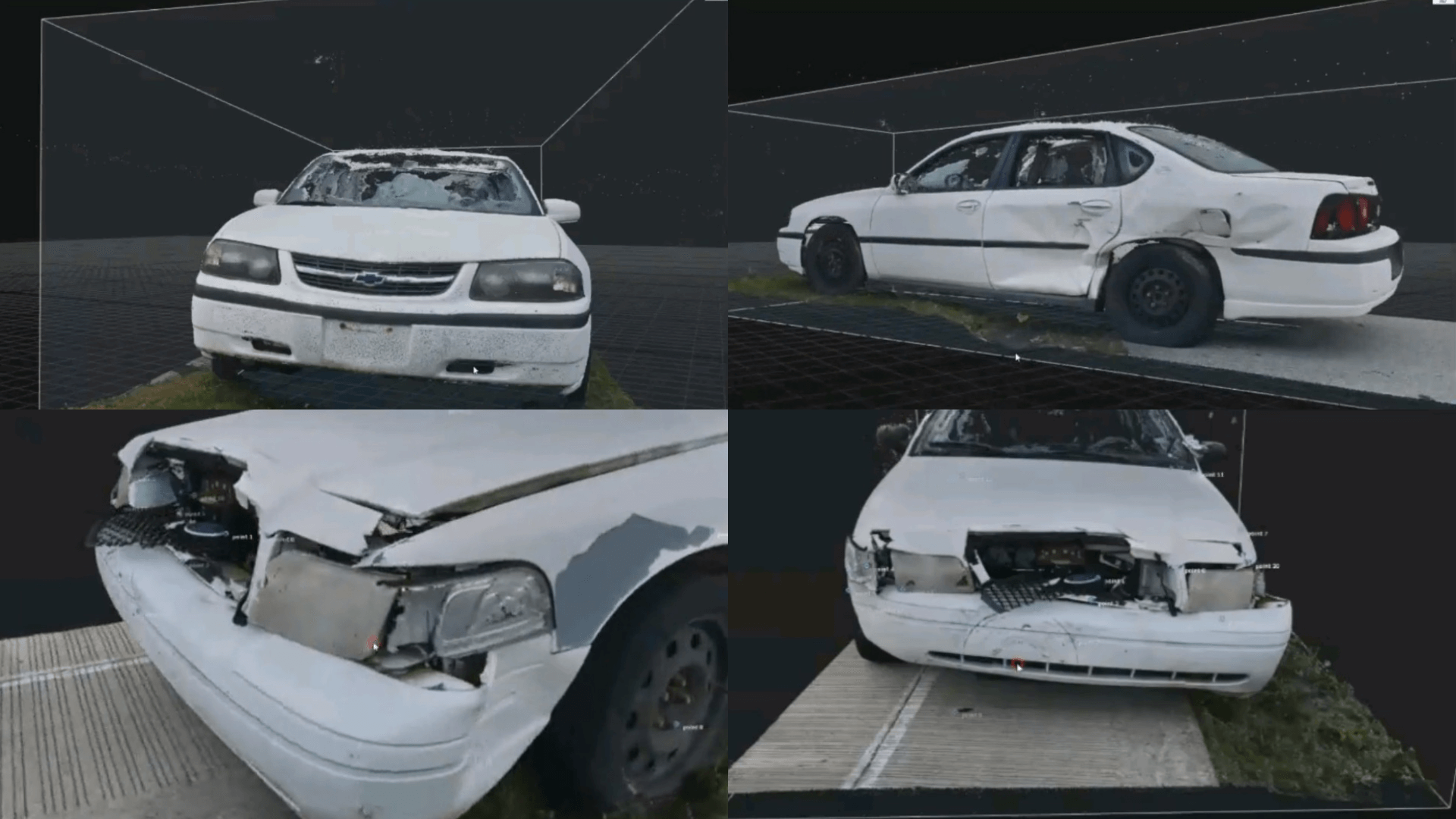
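Measurements like these come down to simple geometry on points picked from the model. The sketch below is not the LAS3D implementation; the helper names and the coordinates (assumed to be in metres) are hypothetical and only illustrate the idea.

```python
import math


def distance(a, b):
    """Straight-line distance between two (x, y, z) points."""
    return math.dist(a, b)


def path_length(points):
    """Length of a polyline, e.g. a curved skid mark traced point by point."""
    return sum(distance(a, b) for a, b in zip(points, points[1:]))


# Hypothetical coordinates in metres, as if picked from the 3D model.
impala = (0.0, 0.0, 0.0)
crown_vic = (3.0, 4.0, 0.0)
skid_mark = [(0.0, 0.0, 0.0), (6.0, 0.0, 0.0), (6.0, 8.0, 0.0)]

print(distance(impala, crown_vic))  # 5.0
print(path_length(skid_mark))       # 14.0
```

Tracing a skid mark as several short segments, rather than measuring end to end, captures its true length when the mark curves.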

Also, use the data - aided in this case by the DSLR images - to study the vehicle damage up close.

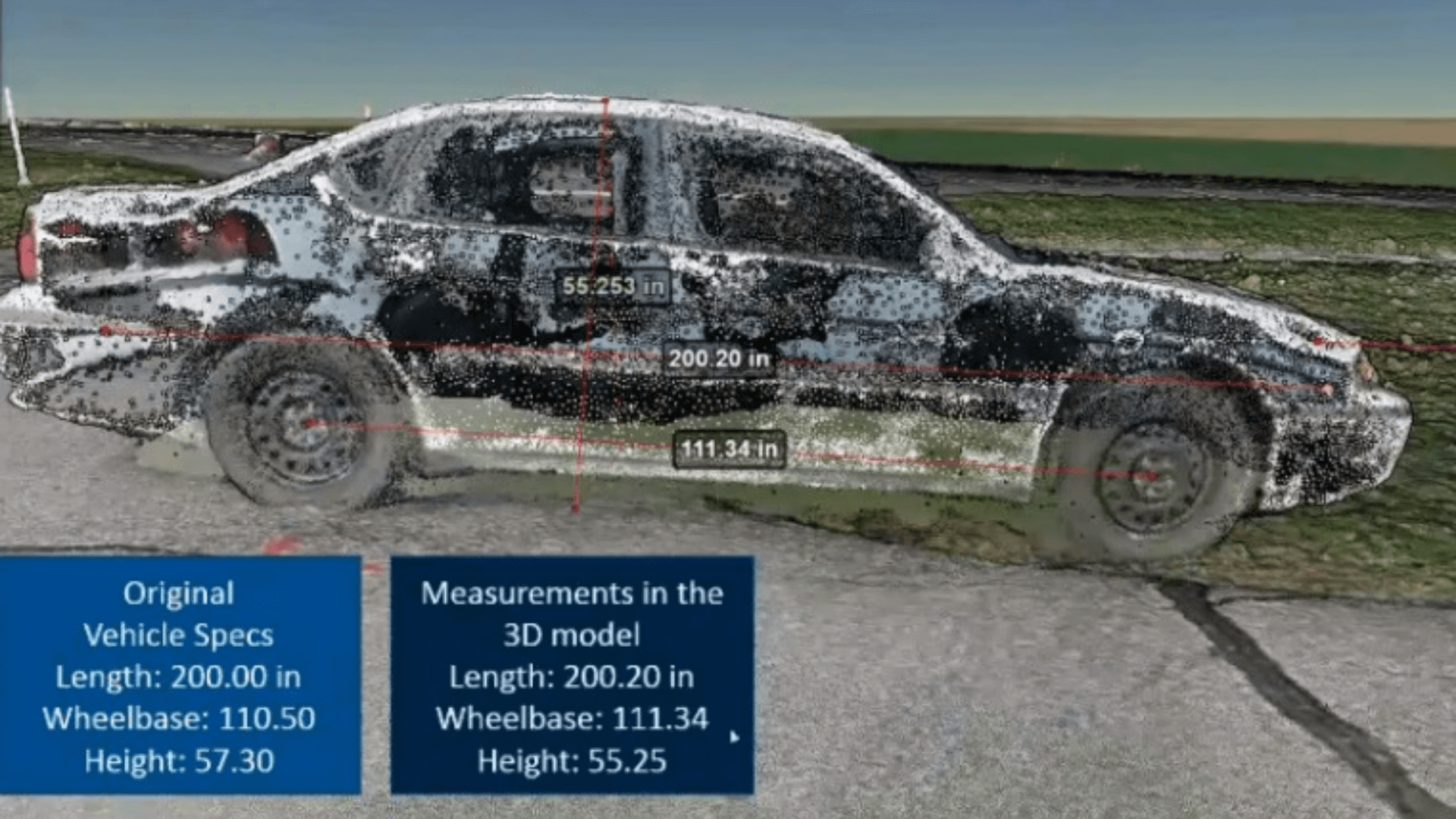

Drone data is incredibly accurate, too.

This image is a recreation of one of the vehicles, created using just the drone data from the crash scene.

It highlights how precise drone data can be, when comparing the original vehicle specs to the measurements in the 3D model.

| | Original Vehicle Specs | 3D Model Measurements |
|---|---|---|
| Length | 200.20 in | 200.20 in |
| Wheelbase | 110.50 in | 111.34 in |
| Height | 57.30 in | 55.25 in |

As the table shows, the model matches the original specs closely. There are slight differences, but these could be the result of the crash.

For instance, the vehicle was originally 57.30 inches in height. The drone data shows it to be 55.25 in - but the car is not on level ground, with the left side sitting on grass and being lower down.

The length measurements were identical, and the wheelbase measurements were close. Admittedly, the 3D model shows a slightly longer wheelbase (111.34 in against 110.50 in), but this could have been caused by the impact of the collision.
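To put numbers on those differences, the short sketch below works out each deviation in inches and as a percentage of the original spec. The figures are copied from the comparison above; the `deviation` helper is just an illustrative name.

```python
# Values in inches: (original vehicle spec, 3D model measurement).
measurements = {
    "Length": (200.20, 200.20),
    "Wheelbase": (110.50, 111.34),
    "Height": (57.30, 55.25),
}


def deviation(spec, model):
    """Return (difference in inches, difference as a percentage of the spec)."""
    diff = model - spec
    return diff, 100.0 * diff / spec


for name, (spec, model) in measurements.items():
    diff, pct = deviation(spec, model)
    print(f"{name}: {diff:+.2f} in ({pct:+.2f}%)")
```

The wheelbase differs by less than 1% and the height by roughly 3.6%, with the height gap attributed above to the car sitting on uneven ground.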

3D models are a great asset for accident reconstruction.

But drone data can also be used to create useful, detailed and high-resolution 2D maps to provide another layer of detailed analysis.

Mr Karamooz said: "These models were captured minutes after the crash and they are digitally preserved for eternity and every detail is recorded.

"If teams use cloud-based software, it allows the data sets to be accessed remotely and viewed by multiple people simultaneously in any location."

Benefits of Drone Mapping For Crash-scene Investigation

Deploying a drone to reconstruct a crash scene enables fast and accurate creation of 2D maps and 3D models for in-depth analysis.

But how does this pay off in the real world?

As the list below shows, using a drone for crash-scene investigation has multiple benefits:

Re-open roads faster: Faster data collection means that officers can clear the scene more quickly and get the road re-opened sooner. Every four minutes of road closure creates a mile of traffic backlog.

Increased safety: Gathering evidence quickly reduces the risk of on-scene officers being exposed to secondary accidents and injuries. One statistic shows that the likelihood of a secondary crash increases by 3% for every minute the primary incident is ongoing. Traffic crashes and struck-by incidents are also among the leading causes of on-duty injuries and deaths for law enforcement, firefighters, and towing and recovery personnel.

More Effective Resource Management: Reducing the time it takes to collect data means that resources can be deployed elsewhere sooner than before.
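The two rules of thumb quoted in the list can be turned into a rough estimate of what each saved minute is worth. This is only a sketch: it assumes both figures scale linearly and that the 3% increments simply add up minute by minute, and the 20 minutes saved is a hypothetical input, not a figure from any study.

```python
MINUTES_PER_MILE_OF_BACKLOG = 4      # a mile of backlog per four minutes of closure
SECONDARY_CRASH_PCT_PER_MINUTE = 3   # quoted increase per minute of the incident


def backlog_miles(minutes):
    """Miles of traffic backlog built up over the given closure time."""
    return minutes / MINUTES_PER_MILE_OF_BACKLOG


def secondary_crash_increase(minutes):
    """Percentage increase in secondary-crash likelihood (additive assumption)."""
    return minutes * SECONDARY_CRASH_PCT_PER_MINUTE


minutes_saved = 20  # hypothetical time saved by mapping with a drone
print(f"Backlog avoided: {backlog_miles(minutes_saved)} miles")
print(f"Secondary-crash increase avoided: {secondary_crash_increase(minutes_saved)}%")
```

Real traffic will not follow a straight line like this, so treat the output as an order-of-magnitude guide only.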

The advantages are clear.

As Romeo Durscher, DJI's Senior Director of Public Safety Integration, concludes: "If your department is able to use a drone to reopen a road 25-to-40% faster than with traditional methods, that’s a big win.

"That means the public can use that road quicker, it has an economic impact, and it gets you home sooner. Those are big wins."

Drones v Traditional Methods

To truly understand how effective drones are for crash-scene investigation, it is important to compare this technique against traditional methods.

The differences are stark.

Once upon a time, emergency service personnel and first responders would complete the task manually.

This involved walking the scene with rulers and tape measures, marking Xs and Os on sheets of graph paper.

It was a time-consuming, labour-intensive, and potentially dangerous task - sometimes taking six to eight hours.

Then followed a revolutionary step forward - deploying Total Stations.

This electronic, optical instrument - mounted on a tripod - measures vertical and horizontal angles. A popular surveying tool, it integrates an electronic theodolite with an electronic distance meter.

At the time, Total Stations were ground-breaking for accident reconstruction.

They helped to collect data quicker than before, shaving the eight-hour process down to three or four.

But more recently, crash-scene investigators have added a new tool to their arsenal - drones.

Flying UAS cuts the time it takes to map a site and gather evidence down to 20 or 30 minutes.

In fact, drone mapping can take as little as eight minutes.

It means that officers can fly over a site and collect the data in a fraction of the time.

Subsequent 2D maps and 3D models allow for detailed inspection, which is vital for evidence and for understanding the causes of the crash.

Visual drone imagery also provides a bird's eye view of a scene for enhanced situational awareness.

Best Drones For Crash-scene Investigation

Drones in the DJI ecosystem provide suitable and diverse options for mapping a crash scene.

The most suitable option is the DJI Phantom 4 RTK, a next-generation low-altitude mapping platform.

It has a 20MP camera and can capture real-time, centimetre-level positioning data for improved absolute accuracy on image metadata.

The M210 V2 RTK, coupled with an X7 camera (either the 24 mm or 35 mm lens), is another suitable solution.

At a GSD (ground sample distance) of 2 cm, the orthoimages produced can achieve absolute horizontal accuracy of less than 5 cm using DJI Terra as the mapping software.
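GSD depends on the flight altitude and the camera geometry, so it can be estimated before take-off. The sketch below uses the standard relation for a camera pointing straight down. The camera values are assumed nominal figures for a 1-inch, 20MP sensor of the kind fitted to the Phantom 4 RTK; they are not taken from this article and should be checked against the spec sheet.

```python
def gsd_cm(altitude_m, sensor_width_mm, focal_length_mm, image_width_px):
    """Ground sample distance in centimetres per pixel for a nadir photo."""
    return (sensor_width_mm * altitude_m * 100.0) / (focal_length_mm * image_width_px)


def altitude_for_gsd(target_gsd_cm, sensor_width_mm, focal_length_mm, image_width_px):
    """Flight altitude in metres that yields the target GSD."""
    return (target_gsd_cm * focal_length_mm * image_width_px) / (sensor_width_mm * 100.0)


# Assumed nominal camera values (not from the article); verify before use.
CAMERA = dict(sensor_width_mm=13.2, focal_length_mm=8.8, image_width_px=5472)

# Altitude needed for a 2 cm GSD, and the GSD at roughly 80 feet (about 24 m).
print(round(altitude_for_gsd(2.0, **CAMERA), 1), "m")
print(round(gsd_cm(24.0, **CAMERA), 2), "cm/pixel")
```

Under these assumptions, a 2 cm GSD corresponds to flying at roughly 73 m, while a low flight at about 24 m gives well under 1 cm per pixel.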

For a lightweight option, the Mavic 2 Enterprise series drones are engineered with public safety in mind.

HELIGUY.com™ is an industry-leading supplier of commercial DJI drones and has a track record of providing hardware and advice to the emergency services.

With warehouse facilities in Dallas, Texas, USA, and the United Kingdom, HELIGUY.com™ can supply drone pilots and support UAS projects around the world.

HELIGUY.com™ has in-house DJI™-trained technicians for repairs and custom projects and a CAA-approved drone training team, and offers innovative drone supply options. Contact us for more information.